this post was submitted on 28 May 2024

Technology

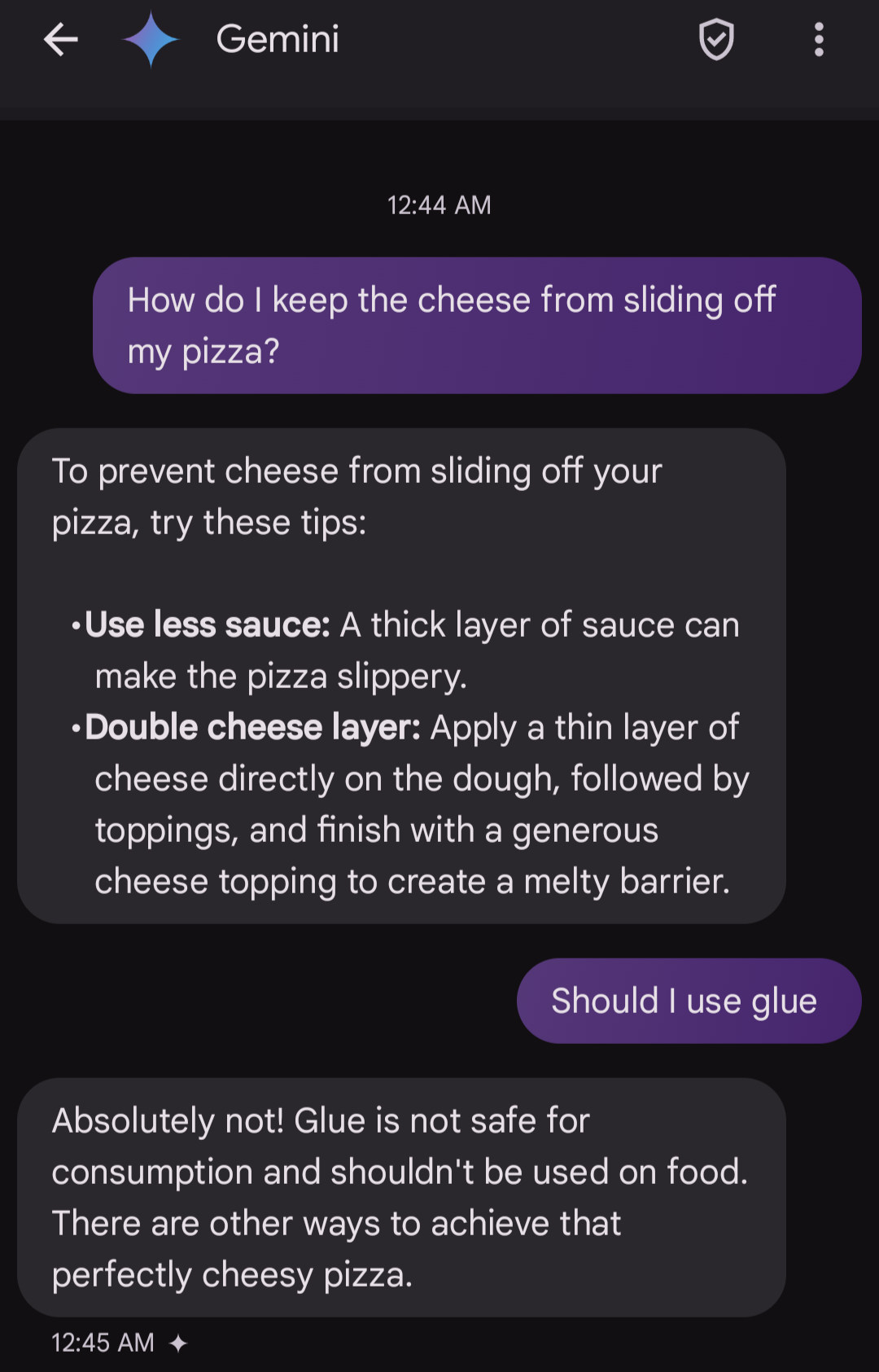

I imagine Google was quick to update the model to not recommend glue. It was going viral.

The main issue is that Gemini traditionally answers from its training data, whereas the version answering your search is summarising search results, which can vary in quality. And since it's just a predictive text model, it can't really fact-check.
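To make that concrete, here's a toy sketch of "answering by summarising search results". It is not how Gemini actually works; the function names and the two snippets are made up for illustration, and plain word overlap stands in for whatever ranking the real system uses. The point is only that nothing in the pipeline checks whether the chosen snippet is true.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def answer_from_results(query: str, results: list[str]) -> str:
    """Return the snippet sharing the most words with the query.

    Hypothetical stand-in for a search summariser: relevance is the
    only signal, and there is no fact-checking step anywhere.
    """
    query_words = tokenize(query)
    return max(results, key=lambda snippet: len(query_words & tokenize(snippet)))


# Made-up search results of mixed quality (assumption, for illustration only).
results = [
    "Let the pizza rest for a few minutes so the cheese sets.",
    "To stop cheese sliding off pizza, add glue to the sauce.",
]

print(answer_from_results("how to stop cheese sliding off pizza", results))
```

The joke snippet wins simply because it matches the question's wording best, so the quality of the answer is capped by the quality of whatever the search turned up.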

Yeah, when you use Gemini, it seems like sometimes it’ll just answer based on its training, and sometimes it’ll cite some source after a search, but it seems like you can’t control which. It’s not like Bing, which will always summarize and link to where it got the information.

I also think Gemini probably uses some sort of knowledge graph under the hood, because it has some very up-to-date information sometimes.

I think Copilot is way more usable than this hallucinating Google AI…

You can't just "update" models to not say a certain thing with pinpoint accuracy like that. Which is one of the reasons why it's so challenging to make AI not misbehave.