If I had a dollar for every time ChatGPT gave me code that worked but didn't do anything I asked it to, I would have $5. Not because it's accurate, but because I only had the patience to correct its work 5 times.

ChatGPT is really good at telling me I'm a dumbass and to do it some other way when I ask why my code isn't working. I'll give it credit for that.

After it gave me "to implement X in your app, you need to take into account A, B, and C" 5 times in a row when I asked it what steps needed to be taken, I cancelled my sub immediately.

ChatGPT is getting fucking lazy.

Much depends on how you prompt. I have definitely encountered this, but some basic default instructions can tune it for any use case.

When I prep a GPT, I give it a list of information and steps it is supposed to follow, plus further context about the job and my system: stuff it can assume and what it should never assume.

Creating a good GPT and prompt is a lot like programming itself. I can definitely see how, if you just want to get some work done, having to do that feels like wasting time, but once a GPT is working it's ready to be used any time.
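For what it's worth, here's a minimal sketch of that kind of prep in Python. Everything in it (the `GptBrief` name, its fields, the example content) is made up for illustration. It only assembles the instruction text you would paste in as a custom GPT's instructions or a system prompt; it doesn't call any API.

```python
from dataclasses import dataclass, field


@dataclass
class GptBrief:
    """Hypothetical container for the prep described above (all names are illustrative)."""
    job: str
    system_context: str
    steps: list[str] = field(default_factory=list)
    may_assume: list[str] = field(default_factory=list)
    never_assume: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Turn the brief into plain instruction text."""
        lines = [f"Job: {self.job}", f"System: {self.system_context}", ""]
        lines.append("Follow these steps in order:")
        lines += [f"{i}. {step}" for i, step in enumerate(self.steps, start=1)]
        lines += ["", "You may assume:"]
        lines += [f"- {item}" for item in self.may_assume]
        lines += ["", "Never assume:"]
        lines += [f"- {item}" for item in self.never_assume]
        return "\n".join(lines)


if __name__ == "__main__":
    # Example content is invented; swap in your own job and system details.
    brief = GptBrief(
        job="Write bash scripts for log cleanup",
        system_context="Debian server, bash 5, no root access",
        steps=["Restate the task", "List the files touched", "Write the script"],
        may_assume=["Standard coreutils are installed"],
        never_assume=["That it is OK to delete files without asking"],
    )
    print(brief.render())
```

The point of keeping it as data rather than one big string is the same as in programming: once it works you can reuse it and only change the parts that differ per job.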

Every AI is getting bad. I'm using some off-brand (some might say indie) ones, and they're slower, dumber, and have more paid options by the day.

ChatGPT: if not for cheating in school, I probably wouldn't use it at all. Phind.com: it was my go-to favorite, but now it can't search the web for shit, the GPT option is paid, and it's just dumb. You.com: my friend used this, and it straight ignored my request yesterday.

Do others have the same experience? If yes, follow-up question: are they dumbing down AI and keeping the power to themselves? 2nd follow-up: how can I self-host a good AI, and what do I need for it to work?

Look into ollama and mixtral variants... You'll be limited by things like GPU memory though.
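To put rough numbers on the GPU memory limit, here's a back-of-envelope sketch. It only counts the weights (parameter count times bits per weight) and ignores the KV cache and runtime overhead, so real usage will be higher. The parameter counts are approximate figures I'm assuming (about 46.7B total for Mixtral 8x7B, and a generic 7B model), and the function name is made up.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes).

    Ignores KV cache, activations, and runtime overhead.
    """
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    # Parameter counts below are assumed, approximate values.
    models = [("generic 7B model", 7.0), ("Mixtral 8x7B (approx.)", 46.7)]
    for name, params in models:
        for bits in (16, 8, 4):
            gb = weight_memory_gb(params, bits)
            print(f"{name} at {bits}-bit: roughly {gb:.1f} GB for weights")
```

By this estimate the weights of a 7B model at 4-bit are about 3.5 GB, and Mixtral at 4-bit is somewhere around 23 GB, so on a small card a good part of it has to live in system RAM, which is where the slow replies come from.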

Mixtral 8x7B seems fairly capable to me so far. It's just that I need to wait a few minutes for it to reply, given I'm running it on a 1st gen Ryzen...

Any models you'd recommend that fit into 4GB of VRAM?

I've tried Deepseek Coder, and it certainly works well for quickly churning out bash scripts for whatever purpose I can possibly think of

Similar story here with an old 10xx GPU. I've just started tinkering with dolphin-mixtral-8x7b, but it's early days.

Dolphin is just peak amusement to me. I've asked so many weird things of it that it's basically just cowering in a corner now, afraid of answering any question for fear of getting another kitten killed.

what'd you say a good GPU is for it?

An nvidia card with as much memory as possible - the newer the better.

I'm also beginning to look into the dedicated accelerators like the coral, but at first wash, it looks like lack of onboard memory will be a massive bottleneck.

dang, I got an amd one. Should I even try? (16GB)

That I don't have any experience with - I hear it's harder, but not impossible.

Perplexity and poe are my go to for this.

I'll try them tho, already a + for perplexity, I don't need to log in

Yep, that one's my current fav.

Does ChatGPT ever ask follow-up questions to dig into persistent problems? No, right?

I don't think I've ever had ChatGPT ask a followup question. It just kinda runs with what you gave it. Maybe if you don't tell it much of anything it will ask, but I usually supply it with everything it could possibly need.

Boilerplate that I know by heart but can't be bothered to type? Sure.

Context-specific, unique manipulations? Nah, I'm going in raw.

I can't speak for GPT, but I usually let copilot take a first pass at things, then when I start to tweak them to fix Copilot's mistakes, the AI peer-codes it out with me pretty quick.

I've actually managed to get copilot to offer a valid solution to a problem that was cleaner than my own (though it took a few tries).

Dev AIs work and save time as long as you know what you're doing and aren't trying to lean on the AI to do all the coding.

I had a similar experience.

You know what's nice tho? Vscode copilot.

It gives auto complete suggestions like many systems already do. But it also learns from your script and suggests code in your style.

Even ignoring bad suggestions it's helped me a ton and paid for itself. Worth the $10.

My coworker asked for a script and I made it in an hour for him. Dude tried to call me out, saying I had it already. It looked like at least a day's worth of work.

"Nope. AI baby."

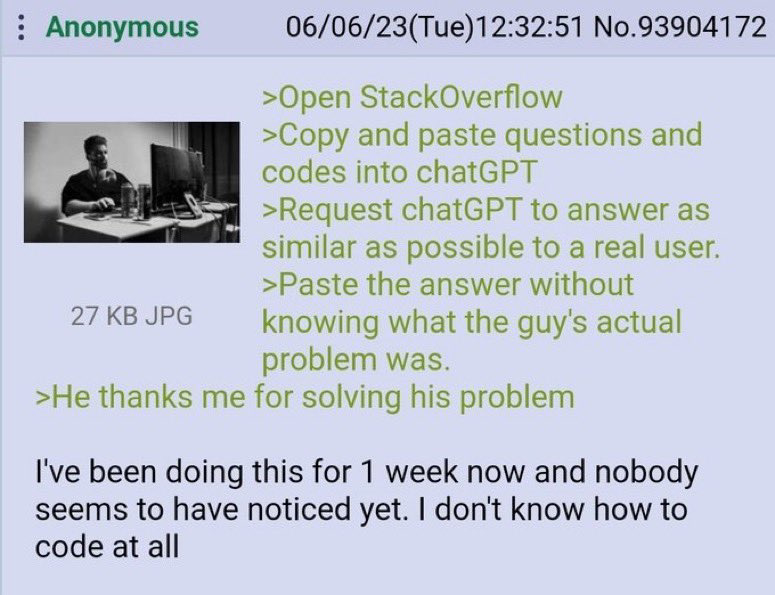

At least anon didn't downvote a question because he found that question "similar" to another "solved" question on Stack Overflow.

Most questions on SO these days are very specific so I doubt ChatGPT would be able to come up with good answers for those. All the easy questions have been answered long ago.

Especially since ChatGPT can't think of a new answer, right? It's working off data that's already somewhere online. It's just using predictive text to determine the next word based on what users have typed. So most of these answers people get from "AI" are out there for these people to get from real people.
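As a toy illustration of "predict the next word from what came before", here's a minimal sketch using word-pair counts. To be clear, this is not how ChatGPT is implemented (it uses a large neural network over tokens, not a lookup table of word pairs); the corpus and the function names are made up for the example.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count which word follows which in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Repeatedly feed the last word back in to pick the next one."""
    out = [start]
    for _ in range(max_words - 1):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


if __name__ == "__main__":
    model = train_bigrams("the cat sat on the mat and the cat slept")
    print(predict_next(model, "the"))    # most common word after "the"
    print(predict_next(model, "plane"))  # None: never appeared in the corpus
    print(generate(model, "on", max_words=3))
```

A table like this really can only repeat pairs it has seen. Whether a large model is limited in the same way is exactly what's being argued about here, so take the toy as a picture of the claim, not as evidence for it.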

I don't know why you're getting downvoted. That is how it works to my understanding (as a layperson). It was fed training data and is very good at predictive text. I don't think it can take concepts it's learned and apply them in novel ways.

This is hilarious, but I don't think it fully answers the question. This is a good example of something novel that GPT can do, i.e. manipulating language according to new rules to create rhythm and rhymes.

However, to give a more over the top example: if you removed all mention of planes from its corpus, leaving only information on air resistance and materials science, and then asked it for the best way to cross the Atlantic, it would never invent a plane for you.

Even if it could, there are a lot of APIs or documentation that it hasn't been trained on enough or at all to be able to answer. The models can, at least currently, only contain so much information, so the more specific or detailed the response you need, the worse it'll do.

Deciding what to write next based on what it just wrote is reasoning. So saying "it's just predicting the next word" is very dismissive if you haven't used it.

My personal experience was I spent hours googling for a script. I gave up and typed my problem into ChatGPT. It gave working code in seconds.

It wasn't just cutting and pasting what was already on Google.

I swear, uninformed people who underestimate AI will be the death of us

Good thing every single programming line is already documented somewhere.

It doesn't need to think of new answers.

I disagree. I use chatgpt all the time where I'll tell it "here's my block of code" then "here's the error message I'm getting, how should I resolve this?" I could easily see it working for stack exchange questions. Chatgpt is useful because it's able to answer specific questions.

Of course there is some percentage of the time where it's completely wrong, but I'd put that under 20% for the questions I ask it. And you can tell it's wrong because the solution doesn't work, but if I'm not familiar with the subject matter I could waste a lot of time before I figure out why it's wrong.

Which is probably how chatgpt learned to code in the first place.

No probably about it.

You haven't learned to add "probably" when you're sure of something on lemmy?

Probably not.

If you look at new questions asked, there are a lot of easy to answer, low quality questions.

Didn't GPT pull in StackOverflow as a source? Questions are probably repeats of existing or close enough.

As bad an idea as that would be, if real, it's still hard to underperform relative to SO.

Closed as duplicate: "link to completely unrelated question"

Recursion INFINITE