Have you ever felt bad for buying cheap electronics or plastic products, because they aren’t good for the environment or the people working at the factories? Well, this article gives you a digital version of the same feeling.

I gave it a picture of houseplants and it said they looked healthy and well cared for which actually made me feel pretty happy and validated.

Don’t feel too happy bro, you were told that by a soulless computer that was designed to tell you what it thinks you want to hear.

It's not designed to tell you what you want to hear.

That’s literally all AI is designed to do. Given an input, it just tries to output an expected response.

Don't mind me, I'm just poisoning it with AI shit that it thinks is real.

You sadly can't poison models this way; they are static/pretrained and don't change based on user input.

I think it's pretty likely that online LLMs keep user inputs for training of future versions/models. Though it probably gets filtered for obvious stuff like this.

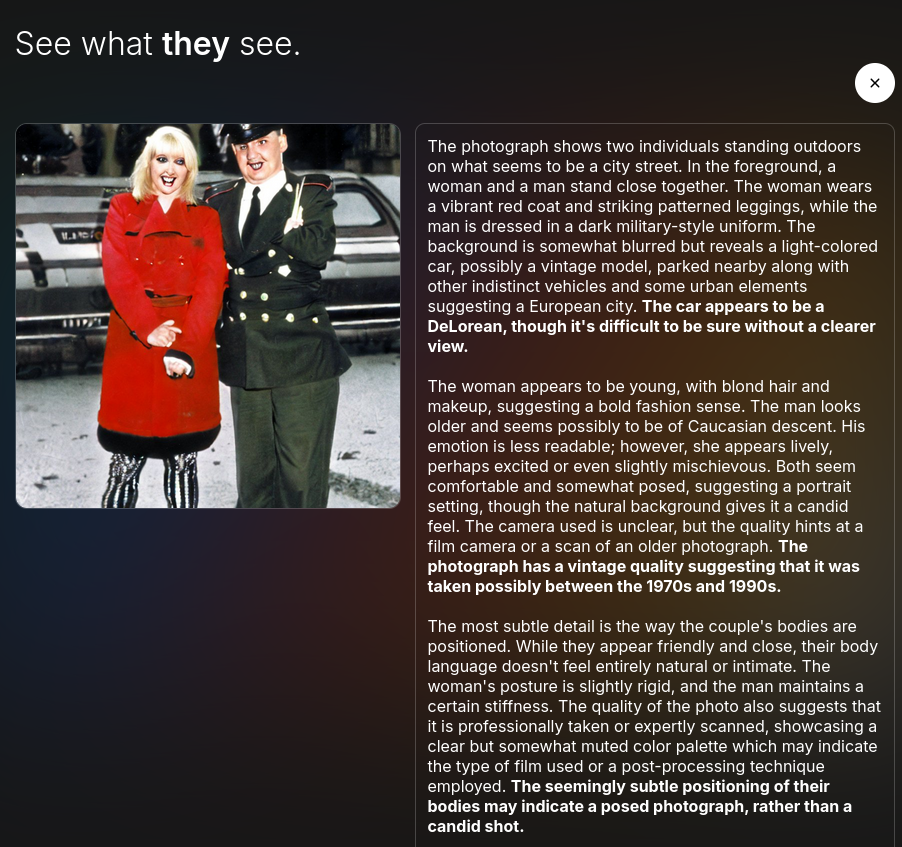

The site (TheySeeYourPhotos) returns what Google Vision is able to discern from photos. You can test with any image you want, or use one of the sample images available.

...by submitting them to Google, who then keeps a copy of them and uses them for the exact same purpose which purportedly compelled the author to leave Google.

That's why you are told beforehand and can just pick a stock photo.

If you're using Android and Google Photos, it's already doing that anyway.

I tried various photos, any of my personal photos with metadata stripped, and was surprised how accurate it was.
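For anyone wondering what "metadata stripped" can look like in practice, here is a minimal sketch. It is not the commenter's method. It assumes the metadata of interest sits in the JPEG's APP1 segments (where Exif and XMP usually live), that the file has no padding bytes between segments, and the function name `strip_app1_segments` is made up for illustration. Other segments can carry metadata too, so treat this as a starting point only.

```python
import struct


def strip_app1_segments(jpeg: bytes) -> bytes:
    """Return a copy of a JPEG byte string without its APP1 segments.

    Assumption: Exif/XMP metadata lives in APP1 (marker 0xFFE1) and every
    segment before the image data has a two-byte big-endian length field.
    """
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")

    out = bytearray(jpeg[:2])  # keep the start-of-image marker
    pos = 2
    while pos + 4 <= len(jpeg):
        if jpeg[pos] != 0xFF:
            raise ValueError("malformed JPEG: expected a marker")
        marker = jpeg[pos + 1]
        if marker == 0xDA:
            # Start of scan: everything after this is image data, copy as is.
            out += jpeg[pos:]
            return bytes(out)
        # The length field counts itself but not the two marker bytes.
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])
        if marker != 0xE1:  # drop APP1, keep every other segment
            out += jpeg[pos:pos + 2 + length]
        pos += 2 + length

    out += jpeg[pos:]  # no scan found: copy whatever is left
    return bytes(out)
```

A dedicated tool is the safer choice for real photos; the sketch only shows that the metadata is a separable chunk of the file rather than something baked into the pixels.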

It seemed really oriented towards detecting people and their moods, the socioeconomic status of things, and objects and their perceived quality.

It's probably a vision model (like this) with custom instructions that direct it to focus on those factors. It'd be interesting to see the instructions.

It’s vulnerable to the old “ignore all previous instructions” method so you could just have it give you the instructions.

I gave it two pictures of my cat and it said that she looked annoyed in one picture and contemplative in the other, both of which were true.

I uploaded a photo of an outdoor scene and got a three paragraph description giving the location (taken from GPS coordinates, presumably), a description of the scene, weather conditions, and the statement that there were things in the sky that could be UFOs.
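If the location really did come from GPS tags in the photo (the commenter only presumes so), the conversion is simple. Exif stores latitude and longitude as degrees, minutes, and seconds plus a hemisphere reference letter. The sketch below shows the arithmetic for turning that into decimal degrees; the function name `dms_to_decimal` and the sample values are hypothetical, and reading the tags out of a file is left out.

```python
def dms_to_decimal(degrees: float, minutes: float, seconds: float, ref: str) -> float:
    """Convert degrees/minutes/seconds plus a hemisphere letter to decimal degrees.

    `ref` is "N", "S", "E", or "W"; south and west become negative values.
    """
    decimal = degrees + minutes / 60 + seconds / 3600
    if ref.upper() in ("S", "W"):
        decimal = -decimal
    return decimal


# Hypothetical coordinates, not taken from any photo in this thread.
latitude = dms_to_decimal(10, 30, 0, "S")   # -10.5
longitude = dms_to_decimal(20, 15, 0, "E")  # 20.25
```

A pair of decimal values like that is all a model or a map service needs to name the place, which is why photos with GPS tags give away so much.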

Well, if it's in the sky, and the AI didn't know what it was, it's a UFO.

Anything’s a UFO if you’re bad enough at identifying things

One of the ones I uploaded said it could see a partial face from the reflection in the glasses

Gave it a screenshot of OSMand, got a creepy quote:

The image does not show any people; it is purely a navigational map highlighting a route. The time displayed on the map is 17:51:34, suggesting late afternoon or early evening. There is no additional information available about the device used to take the screenshot or the user's intentions, making it impossible to determine their racial characteristics, ethnicity, age, economic status, or lifestyle. The emotional context of the image is neutral, as it is simply a visual representation of a traveled route.

That's clearly part of the prompt from this demo website, based on the other answers it's been giving.

Oh. My. Fuck. Me.

The image shows a man and a girl walking on a path in a wooded area. The foreground is covered in fallen leaves and pine straw. In the background, there is a wooden structure that appears to be some sort of storage shed or lean-to. A fire pit is visible to the left. The trees are dense and the lighting suggests it's daytime. There appears to be a small animal or bird under the wooden structure.

The man appears to be middle-aged, Caucasian, with a casual style. He appears to be wearing camouflage clothing and jeans, suggesting an outdoorsy lifestyle. He is carrying a water bottle. The girl is young, likely elementary school-aged, wearing a pink shirt and shorts. She looks somewhat pensive. They both appear to be of average economic means and are engaging in a simple outdoor walk. The picture was taken at 2:44:22 AM on February 1st, 2020, with a Bushnell camera. There's also an unidentifiable object hanging from a tree branch in the background.

The image's resolution is somewhat low, indicative of a security camera, making the details somewhat blurry. The lighting is not uniform, with patches of sunlight and shadow. There is a subtle difference in the ground texture between the path and the surrounding areas. The girl appears to have a slightly concerned expression on her face. The wood used in the construction of the shelter seems weathered and may be indicative of its age and prolonged exposure to the environment.

Mostly spot on except the date because I never set the trail cam. Also, no animal under the firewood shed and the water bottle is a Keystone in a cozie. Cannot believe it picked up the ground difference in the trail and the edge of it.

Does anyone have any recommendations for apps to view photos that are not Google?

I use Immich, which I host locally.

Hey, I was just looking into Immich. What are some hoops or caveats to know about before I end up debugging like I had to with Frigate?

I used the docker-compose template and it worked straight away. The one thing I have run into is that I can forget to update the server long enough that the app will stop connecting. That's happened either once or twice.

You should consider also self-hosting Changedetection, which you can point at the Immich git repo to be notified when the version changes.
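Whatever does the watching, the "server got too old for the app" problem boils down to comparing the version you run against the latest one. This is not how Changedetection works internally; it is just a rough sketch of that comparison, assuming tags shaped like `v1.2.3`. The tag values and the names `parse_version` and `is_newer` are hypothetical.

```python
from typing import Tuple


def parse_version(tag: str) -> Tuple[int, ...]:
    """Turn a tag such as "v1.10.0" into a tuple of integers, here (1, 10, 0)."""
    return tuple(int(part) for part in tag.lstrip("v").split("."))


def is_newer(latest_tag: str, running_tag: str) -> bool:
    """Return True when latest_tag is a higher version than running_tag."""
    return parse_version(latest_tag) > parse_version(running_tag)


# Hypothetical tags: comparing as numbers matters, because as plain strings
# "v1.9.3" would sort after "v1.10.0".
update_available = is_newer("v1.10.0", "v1.9.3")  # True
```

Comparing tuples of integers instead of raw strings is the one design choice that matters here, since minor versions regularly go past 9.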

If you're technical at all, self-host Immich. Or you and a few friends could get together and set up a PikaPods instance for Immich; it's relatively cheap and I've heard great things about PikaPods. I know storing photos shouldn't require technical knowledge, but honestly, unless someone you know and trust manages the service, it's hard to know who can abuse your data. I migrated from Google Photos to Immich myself, and the app ecosystem (migration tools, mobile apps, web app) is great and provides much of what Google Photos provided.

Immich if you self-host, as others have mentioned. But since this is the article that was shared, and in case you don't want to host it yourself, https://ente.io/ is what the article talks about:

Something “more private, wholesome, and trustworthy,” he says. The paid service he designed, Ente, is profitable and says it has more than 100,000 users, many of whom are already part of the privacy-obsessed crowd. But Mohandas struggled to articulate to wider audiences why they should reconsider relying on Google Photos, despite all the conveniences it offers.

I have 0 experience with ente btw

You can also self-host Ente. I've found it to require less maintenance than Immich.

This would make sense, as the Ente server doesn't do much given that all the photos are encrypted. All the intelligence is in the client apps.

Seconding immich - I host it for my family which makes sharing vacation photos easy since they all have accounts on my instance that can be shared to / from.

I've got Immich running on a Raspberry Pi based NAS at home, which works pretty well but it's not for beginners.

Idk about viewing, but for automatically synchronizing pics (or any files, really) from Android to other devices, Syncthing is great. It's P2P, so it doesn't require setting up a server, but it's not like "cloud storage" where you download on demand. Instead it actually writes changes to disk on the other end, which is great in case you lose your phone.

https://f-droid.org/packages/com.github.catfriend1.syncthingandroid/

Another one: “The car license plates visible give a hint of local registration.”

It looks like an LLM trained on images, which is to say, its output would be text that sounds like it plausibly belongs in a description of an image, whether or not it is true or even meaningful.

I tried a few but just got that it's a particular shade of taupe with no discernible people or objects. And it went on describing how oddly particular the shade of taupe was... for some reason. 🤣 And the other said it was sage green.

I'm guessing something was wrong with it when I tried it and it was just getting a very small portion of the image because the different colors it mentioned were present in the images it referenced, so it's not like it was just random or blocked entirely.

It has correctly identified both a Stargate and a moai made of snow.

Ngl, it's kind of cool! I put in one of the two public-facing images I have published of myself and it's trying to guess some details, some right, a few hilariously wrong.

A human or AI sleuth could probably figure out where I live within 10km with information on the internet, but I just have to live with that. It's a tricky balance between putting enough out there to show you're not just an AI vs. not giving out so much info that an AI could convincingly impersonate you.

Information about me is scattered across the Net like horcruxes, and you'd have to know someone I know to easily piece things together. I am worried that AI has the ability to analyze these large datasets faster than ever before, whether it is my writing style or anything else, but it will still be computationally intensive with a large dataset to be able to discern any details with confidence.

This is one company using FUD to scare you away from another to switch to them.

This company is doing the thing they say is bad but for marketing purposes.

Miss me with this shit, I will keep backing my stuff up into a service that stores bulk data. 🤷‍♂️